1 min read

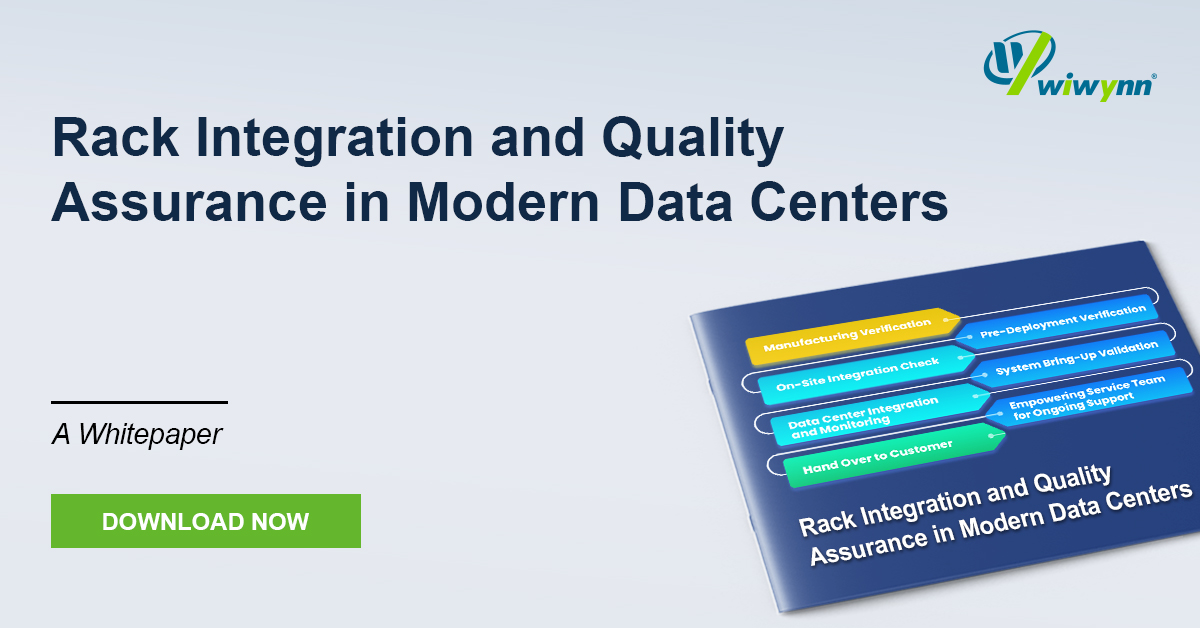

White Paper: Rack Integration and Quality Assurance in Modern Data Centers

The evolution of modern data centers demands a shift from isolated hardware delivery to seamless infrastructure integration. This whitepaper...

1 min read

Press

Updated on July 11, 2025

As thermal design power (TDP) for modern processors such as CPUs, GPUs, and TPUs exceeds 1 kW, traditional air cooling methods are proving insufficient. Liquid cooling, particularly using cold plates, has emerged as a superior solution. This paper from Wiwynn introduces an advanced cold plate design that integrates in-package liquid cooling with jet impingement techniques to minimize junction-to-liquid thermal resistance, eliminating multiple layers of thermal interface material (TIM) and improving heat transfer efficiency.

The proposed integrated cold plate was evaluated through numerical simulation and experiment. Simulation results show that the integrated cold plate outperforms traditional designs, reducing thermal resistance by 8%. Additional fluidic channels within the chip package, combined with direct liquid cooling, improve performance further, by up to 25.7%. The jet impingement cold plate design reduces thermal resistance by 20–48% compared to traditional cold plates. When combined with the integrated cold plate, the impingement design reduces thermal resistance by up to 53%.
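To give a feel for what these percentages mean at the chip, the short sketch below applies the quoted reductions to a lumped steady-state model, T_j = T_liquid + P × R_th. The baseline resistance (0.040 K/W), the liquid temperature (40 °C), and the 1 kW power level are illustrative assumptions and are not taken from the whitepaper; only the percentage reductions come from the text above. Each reduction is applied independently to the same assumed baseline, since the summary does not say how the figures combine.

```python
def reduced_resistance(baseline_k_per_w: float, reduction_pct: float) -> float:
    """Thermal resistance after applying a percentage reduction to a baseline."""
    return baseline_k_per_w * (1.0 - reduction_pct / 100.0)


def junction_temp_c(power_w: float, r_th_k_per_w: float, liquid_temp_c: float) -> float:
    """Steady-state junction temperature from a lumped junction-to-liquid resistance."""
    return liquid_temp_c + power_w * r_th_k_per_w


# Hypothetical operating point (assumed for illustration, not from the whitepaper).
POWER_W = 1000.0      # processor power, in the 1 kW class the text mentions
BASELINE_R = 0.040    # K/W, assumed resistance of a traditional cold plate
LIQUID_C = 40.0       # degrees C, assumed coolant temperature

# Percentage reductions as quoted in the summary above.
REDUCTIONS_PCT = {
    "traditional cold plate (baseline)": 0.0,
    "integrated cold plate": 8.0,
    "jet impingement (low end)": 20.0,
    "jet impingement (high end)": 48.0,
    "integrated + impingement (up to)": 53.0,
}

for label, pct in REDUCTIONS_PCT.items():
    r_th = reduced_resistance(BASELINE_R, pct)
    t_j = junction_temp_c(POWER_W, r_th, LIQUID_C)
    print(f"{label:36s} R_th = {r_th:.4f} K/W   T_j = {t_j:.1f} C")
```

Under these assumed values, the junction temperature falls from 80.0 °C for the baseline to 58.8 °C for the combined design. With a different baseline resistance or coolant temperature the absolute numbers change, but the junction-to-liquid temperature rise shrinks by the same percentage as the resistance.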

These results indicate that integrated impingement cold plates substantially improve thermal management for high-TDP processors, making them a promising technology for data centers and AI applications.

Register to download the whitepaper!
