White Paper: GPU-Initiated, Liquid-Cooled, Ultra-High-Density Storage for Next-Gen AI

This paper introduces a paradigm shift in storage architecture designed to overcome the CPU-centric data path bottlenecks in modern AI workloads. By...

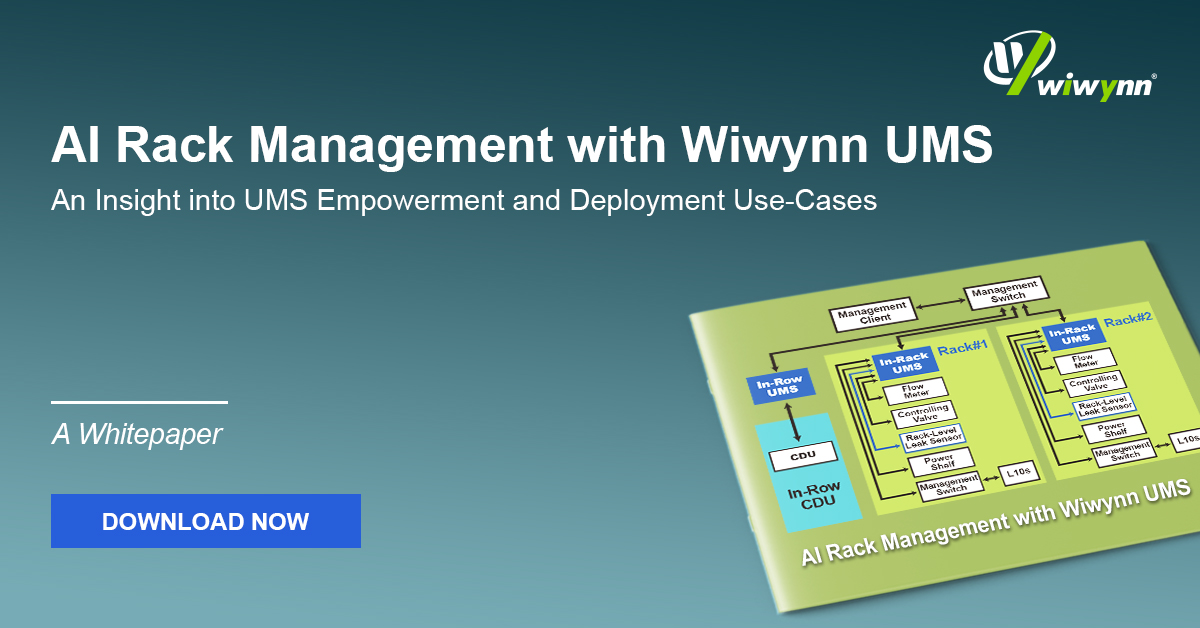

This paper discusses the rapid expansion of AI workloads and the resulting transformation in data center infrastructure requirements. Traditional air-cooling systems are becoming less effective due to the increased power density of server racks. To address this challenge, the paper presents Wiwynn's Universal Management System (UMS) as a solution for managing AI server racks and Direct Liquid Cooling (DLC) infrastructure.

The UMS is designed to provide precise, scalable, and intelligent cooling and monitoring for high-density AI racks. It features a modular hardware design, a dual deployment architecture, and key functionalities such as multi-layer leakage detection, cooling facility management, sensor monitoring, and emergency power management.

The paper also includes real-world deployment examples showcasing the versatility and reliability of UMS in various AI and DLC environments. Future directions for UMS involve expanding its capabilities with AI-based algorithms for anomaly detection, workload-aware cooling optimization, and predictive fault isolation.

Discover how Wiwynn’s UMS unifies AI rack and Direct Liquid Cooling (DLC) management with precise monitoring, multi-layer leak detection, and intelligent power control. Download the full white paper to explore real-world deployments and the next step toward AI-driven, resilient data center infrastructure.

This paper explores a disaggregated key-value (KV) storage architecture designed to efficiently offload KV cache tensors for generative AI workloads.

This paper explores an advanced framework designed to automate the extraction of important attributes from unstructured part datasheets. By...