1 min read

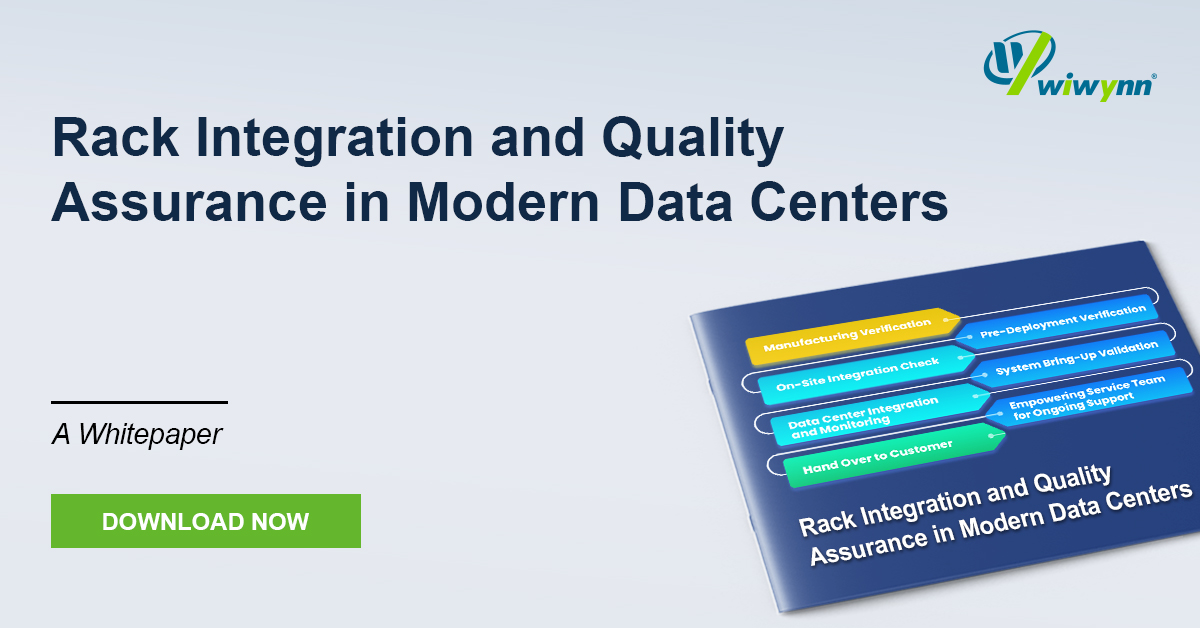

White Paper: Rack Integration and Quality Assurance in Modern Data Centers

The evolution of modern data centers demands a shift from isolated hardware delivery to seamless infrastructure integration. This white paper...

1 min read

Press

Updated on August 4, 2025

AI and large-language-model clusters are straining the limits of traditional fat-tree and star networks. When tens of billions of parameters move across 224 Gbps links, the switch tiers, cable count, and power draw all climb sharply. Our newest white paper explains how a 3D Torus rack-level fabric trims hop counts, shortens cable runs, and reduces switch silicon while preserving the low latency and massive bandwidth required by modern XPU fleets.

Inside the paper you will find head-to-head benchmarks of 3D Torus against Fully-Connected, Tree, and Dragonfly designs under tensor- and pipeline-parallel training. Detailed latency heat maps, watt-per-teraflop savings, and bill-of-materials comparisons show why a small-diameter torus consistently outperforms sprawling Clos fabrics. The guide also covers 224 Gbps SerDes layout, efficient rack wiring, and fault-tolerant routing so that architects can scale cleanly from a single rack to exascale pods.
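To make the "small-diameter" idea concrete, here is a minimal Python sketch of how hop counts work on a 3D torus. It is an illustration under stated assumptions, not the white paper's method: the 4 x 4 x 4 dimensions and the function names `torus_hops`, `torus_diameter`, and `torus_link_count` are hypothetical, and the sketch assumes minimal (shortest-path) routing over wraparound links.

```python
from itertools import product


def torus_hops(a, b, dims):
    """Minimal hop count between nodes a and b on a torus.

    Each dimension has wraparound links, so the distance along one axis
    is the shorter of the direct path and the path through the wrap.
    """
    return sum(min(abs(x - y), k - abs(x - y)) for x, y, k in zip(a, b, dims))


def torus_diameter(dims):
    """Largest minimal hop count between any two nodes (closed form)."""
    return sum(k // 2 for k in dims)


def torus_link_count(dims):
    """Number of bidirectional links, assuming every dimension has size >= 3.

    Each node then has two neighbors per dimension, and each link is
    shared by two nodes, giving nodes * len(dims) links in total.
    """
    nodes = 1
    for k in dims:
        nodes *= k
    return nodes * len(dims)


# Hypothetical 64-node example; the paper's actual dimensions are not stated here.
dims = (4, 4, 4)
origin = (0, 0, 0)

# Brute-force check of the closed form. A torus looks the same from every
# node, so measuring from a single origin is enough.
all_nodes = product(*(range(k) for k in dims))
brute_force_diameter = max(torus_hops(origin, n, dims) for n in all_nodes)

print("diameter (closed form):", torus_diameter(dims))
print("diameter (brute force):", brute_force_diameter)
print("corner-to-corner hops:", torus_hops(origin, (3, 3, 3), dims))
print("bidirectional links:", torus_link_count(dims))
```

In this example the opposite corner `(3, 3, 3)` is only three hops from the origin because each axis can use its wraparound link, which is the property that keeps the diameter small as the node count grows.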

Do not let yesterday’s network architecture throttle tomorrow’s models. Download the full white paper today to plan a 3D Torus topology that accelerates training, lowers power budgets, and cuts total cost of ownership for your AI infrastructure.

1 min read

As AI and High-Performance Computing (HPC) push rack power densities beyond 250 kW and localized heat fluxes past the 500 W/cm² threshold,...

1 min read

This paper introduces a paradigm shift in storage architecture designed to overcome the CPU-centric data path bottlenecks in modern AI workloads. By...