Join Wiwynn at NVIDIA GTC 2026

Create AI Beyond Limits

As AI adoption accelerates across industries, building scalable, efficient, and high-performance infrastructure has never been more critical. At NVIDIA GTC 2026, Wiwynn, together with Wistron, showcases how we enable next-generation AI data centers that combine compute, storage, advanced cooling solutions, and system-level integration, designed to support higher power density and run the most demanding AI workloads.

What We’re Showcasing

At NVIDIA GTC 2026, Wiwynn and Wistron present a comprehensive portfolio of AI servers, networking, and thermal solutions, addressing AI deployments from hyperscale to enterprise environments.

AI Servers and Networking at Every Scale

• NVIDIA Vera Rubin NVL72: An AI supercomputer built for next-generation AI through extreme co-design. It unifies six purpose-built chips (Rubin GPUs, Vera CPUs, NVLink 6, ConnectX-9, BlueField-4, and Spectrum-X) into a single rack-scale system that delivers AI training with one-fourth the GPUs, and AI inference at up to 10 times the throughput per watt and one-tenth the cost per million tokens, versus NVIDIA Blackwell.

• NVIDIA HGX™ Rubin NVL8: Scales AI factories for enterprise, accelerating AI and high-performance computing (HPC) workloads.

• A comprehensive networking product line: unveiling AI-optimized network switches designed for the high-bandwidth, low-latency workloads of AI networking platforms.

Storage‑Next Architecture

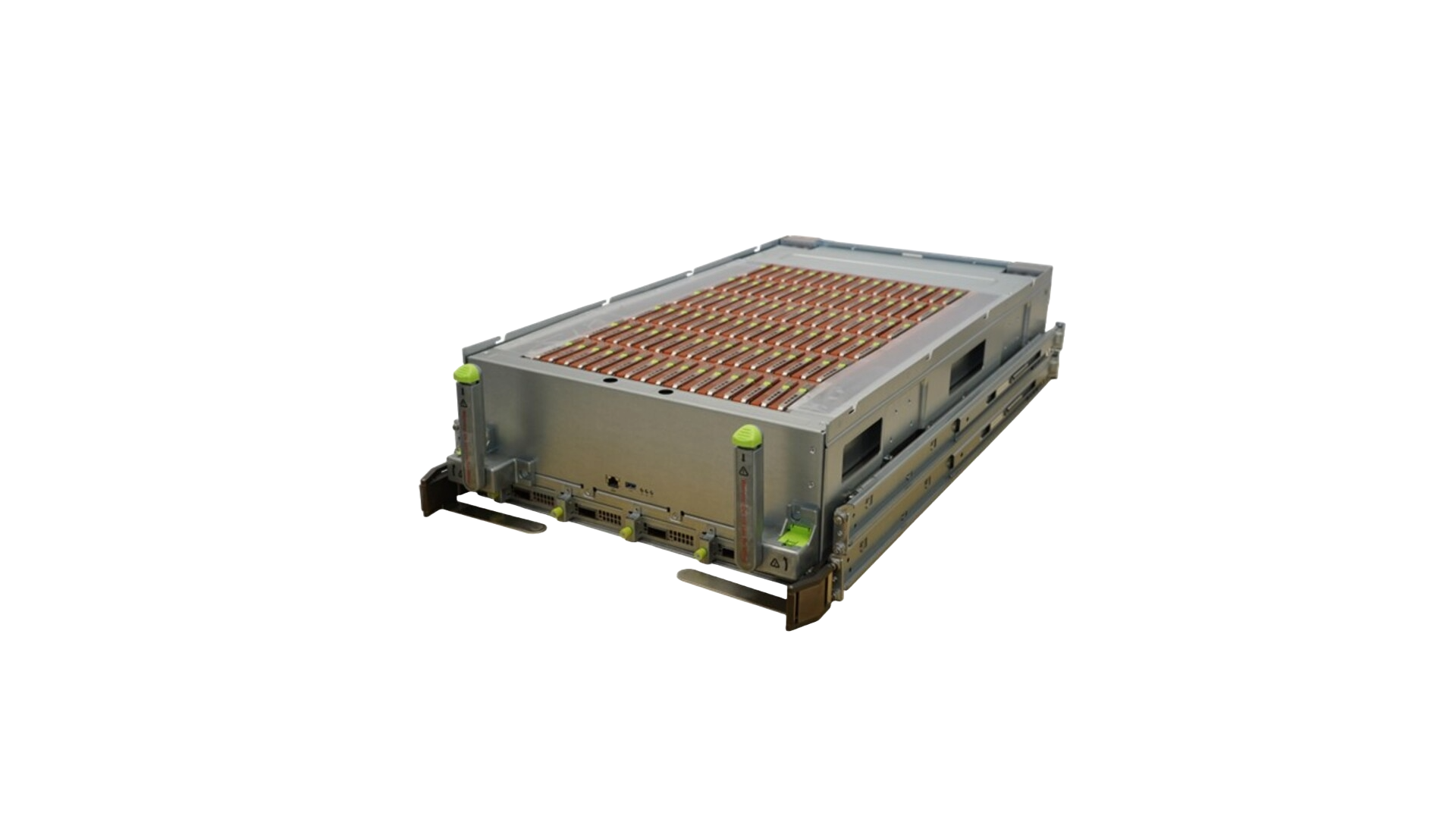

Part of NVIDIA’s Storage Next initiative, this GPU-initiated, 100% liquid-cooled storage architecture leverages NVIDIA SCADA to directly orchestrate I/O across a 96-drive NVMe array, pushing toward 100M IOPS per GPU with sub-millisecond tail latencies for GNN, LLM inference, and RAG workloads. Integrated telemetry and leak detection enable hot serviceability and petabyte-class density.

Advancing Thermal Innovation

• Live demonstrations of innovative thermal solutions designed to support high-power GPUs and dense system architectures.

• Showcasing how advanced cooling technologies enable higher rack density, improved energy efficiency, and long-term system reliability in AI data centers.

Building an AI Data Center Ecosystem

Beyond individual systems, Wiwynn and Wistron focus on building a complete AI data center ecosystem, from foundational infrastructure to future-ready operations.

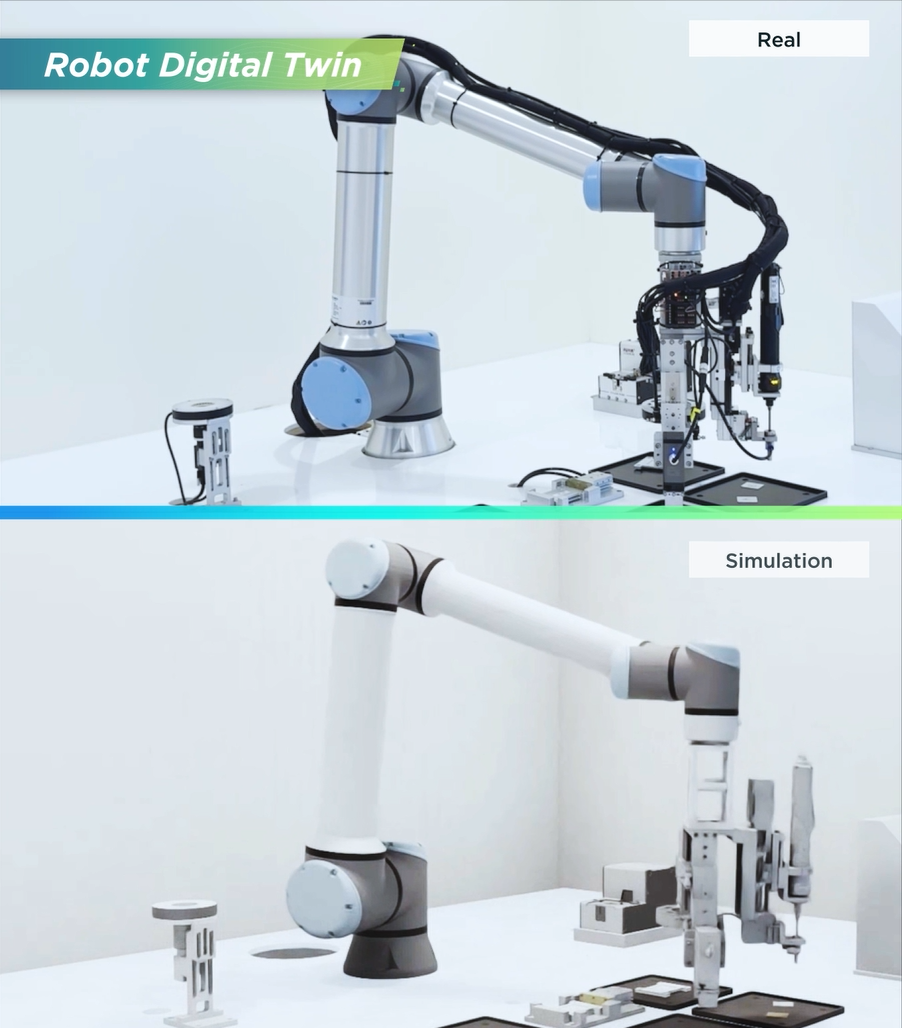

Digital Twin and Intelligent Manufacturing

Building a complete ecosystem via NVIDIA Omniverse™:

• Demonstrating how NVIDIA Omniverse™ enables next-generation manufacturing.

• Interactive digital twins: factory planning, validation, and optimization.

• Immersive Omniverse training and live thermal evaluation.

• Revealing automated manufacturing lines optimized through digital twin environments.

Meet Us at NVIDIA GTC 2026

Whether you are building your first AI cluster or scaling a full AI data center, Wiwynn and Wistron help turn ambition into reality. Visit our booth #1121 to speak with our experts, explore solutions, and learn how our collaboration enables AI innovation at scale.

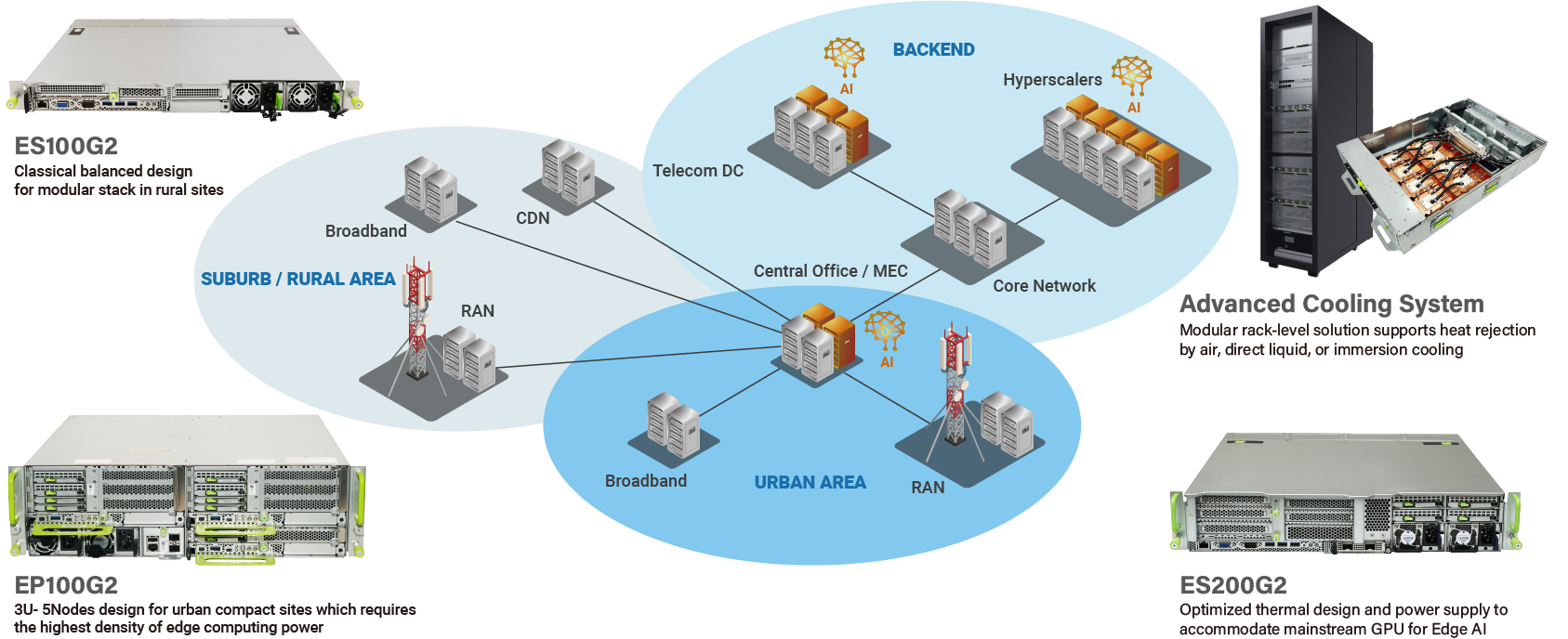

Proven edge platform for versatile applications

Speech

Thu, Mar 19, 10:00-10:40 a.m. PT

Location: Marriott — Willow Glen I-III (L2)

Build an AI-Ready Data Center: Practical Insights from YTL, Wiwynn, and NVIDIA

This session reveals how YTL partnered with Wiwynn and NVIDIA to build an AI-ready data center. We’ll walk through the NVIS deployment methodology—covering site qualification, high-density GPU rack integration, and power/cooling validation under real-world stress. You’ll also learn how to implement end-to-end observability and manage liquid-cooling risks. Finally, we share benchmarking results using MLPerf and AI workloads to confirm the environment meets AI-ready standards.


Ted Pang

Sr. Director, Wiwynn Corp.


Poster Showcase

Autonomous AI Agent for End-to-End Component Data Extraction

Wiwynn’s AI Agent leverages a context-aware RAG pipeline to transform unstructured engineering docs into structured attributes. Hybrid cloud deployment delivers 6× faster processing and an 83% manual effort reduction, ensuring efficient, production-ready data management.

Venue

San Jose Convention Center

Booth #1121

Edge & AI Solutions

ES100G2

The Advanced 1U Short-depth Platform for Edge AI Inference and vRAN/NFV


- 5th Gen Intel® Xeon® Processors with Integrated AI Acceleration

- Optimized vRAN/NFV Platform in Far Edge

- AI Inference for Versatile Edge Applications

- NGC-Ready, NVQual-Certified, Wind River Criteria for Enhanced Performance

- Carrier-Grade and NEBS Level 3 Compliant

ES200G2

The 2U Short-depth Platform for AI Inference/Training and All-in-one vRAN Applications


- 5th Gen Intel® Xeon® Processors and Add-on AI Acceleration

- AI Inference/Training and Diverse Applications

- All-in-one vRAN Platform in Far Edge

- NGC-Ready, NVQual-Certified, Wind River Criteria for Enhanced Performance

- Carrier-Grade and NEBS Level 3 Compliant

EP100G2

All-in-one 5G Platform for CU/DU/5GC/MEC and Private Networks


- 4th Gen Intel® Xeon® Scalable Processors

- Flexible 1U/2U Sled Designs for Versatile Applications

- Intel® QAT and AVX Enabled for Faster 5G/Edge Applications

- Short-Depth Form Factor for Diverse Edge Locations

- Pooled Power and Chassis Controller for Power Efficiency and Management

Vertical Use Cases with Partner Collaborations

Leveraging its proficiency in server design and manufacturing, Wiwynn actively works with ecosystem partners to create synergies by combining expertise in hardware, software, and solution integration.

Across industry verticals, Wiwynn’s open RAN solution is designed to reduce infrastructure complexity and meet field environmental constraints, and its short-depth form factor suits diverse edge locations. With Wiwynn-optimized solutions, you can deploy 5G and open up possibilities that were previously out of reach.