Press

Updated on July 19, 2022

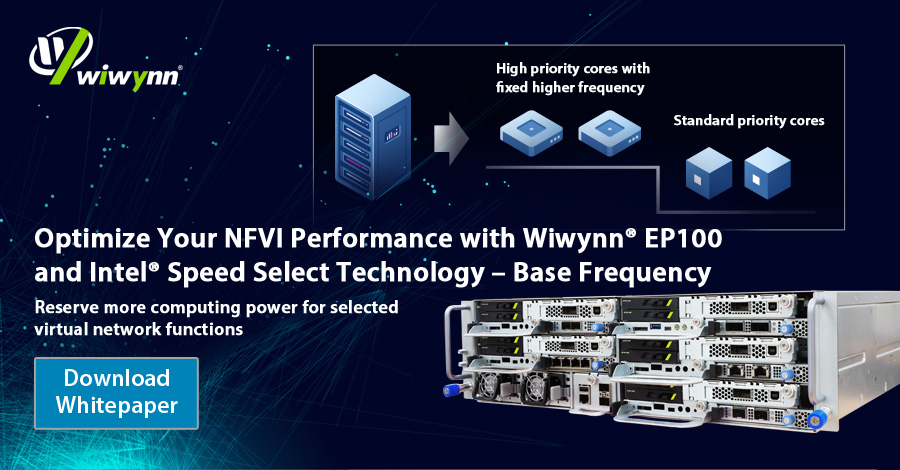

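The Wiwynn® EP100 is designed for the network functions virtualization infrastructure (NFVI) of communications service providers (CoSPs), with a focus on flexibility, serviceability, and performance. Its 1U half-width, single-socket sleds can be configured flexibly, so CoSPs can deploy the EP100 in their existing racks.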

Powered by the 2nd generation Intel® Xeon® Scalable processor family, the EP100 delivers the high-speed packet forwarding that is critical to NFVI. It also adopts Intel® Speed Select Technology – Base Frequency (Intel® SST-BF), a feature aimed at NFVI that boosts the performance of targeted applications essential to some virtual network functions (VNFs).

With support from the Industrial Technology Research Institute (ITRI) and Intel, we used the OPNFV Yardstick/Network Services Benchmarking (NSB) framework in this whitepaper to compare the performance of the Wiwynn® EP100 with Intel® SST-BF enabled and disabled. The Layer 2 forwarding tests were run on an Intel® Xeon® Gold 6252N processor to benchmark Open vSwitch (OVS) and the Data Plane Development Kit (DPDK). The testing not only characterizes the EP100's performance under different CPU frequency configurations, but also helps CoSPs develop a common reference set of performance benchmarks for their applications.

Leave your contact information to download the whitepaper!
