
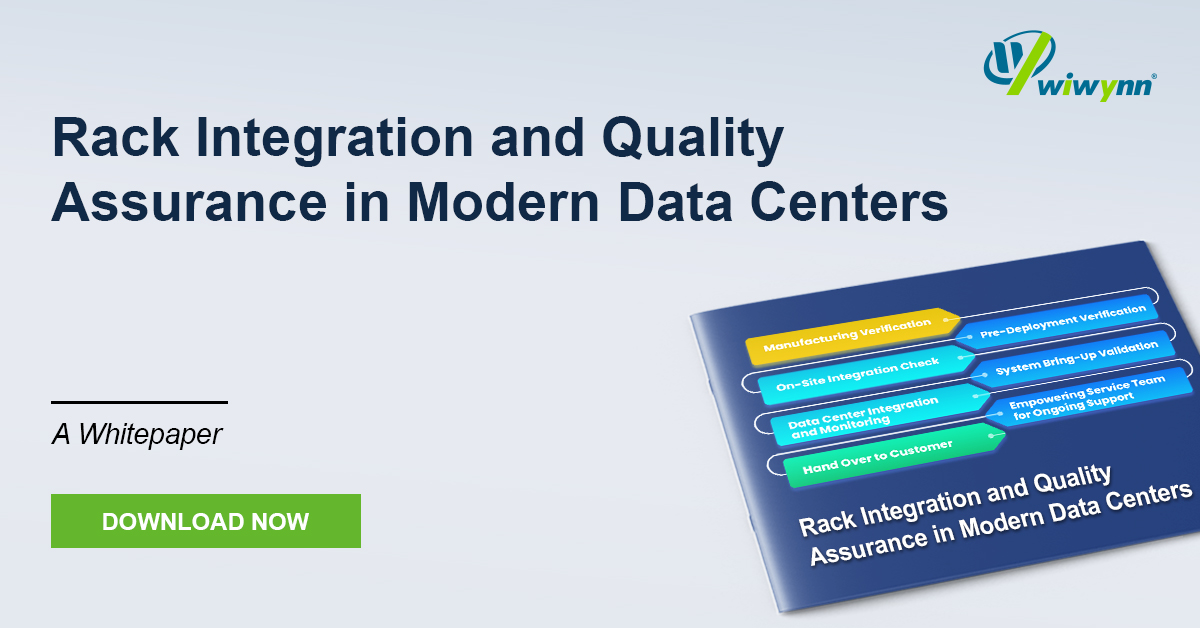

White Paper: Rack Integration and Quality Assurance in Modern Data Centers

The evolution of modern data centers demands a shift from isolated hardware delivery to seamless infrastructure integration. This whitepaper...

As demand grows for faster and more reliable data transmission, data centers must account for a range of architectures and applications, such as 5G network architectures and advances in edge computing.

In this whitepaper, we present design ideas and an actual system built for edge computing, 5G networks, and AI inference. Together, they address challenges such as harsh environmental conditions, diverse application areas, and inflexible designs.

On this basis, we introduce ES200, a system with a modular design that adapts to a variety of applications, including edge computing, along with the accompanying design ideas for data centers.


