1 min read

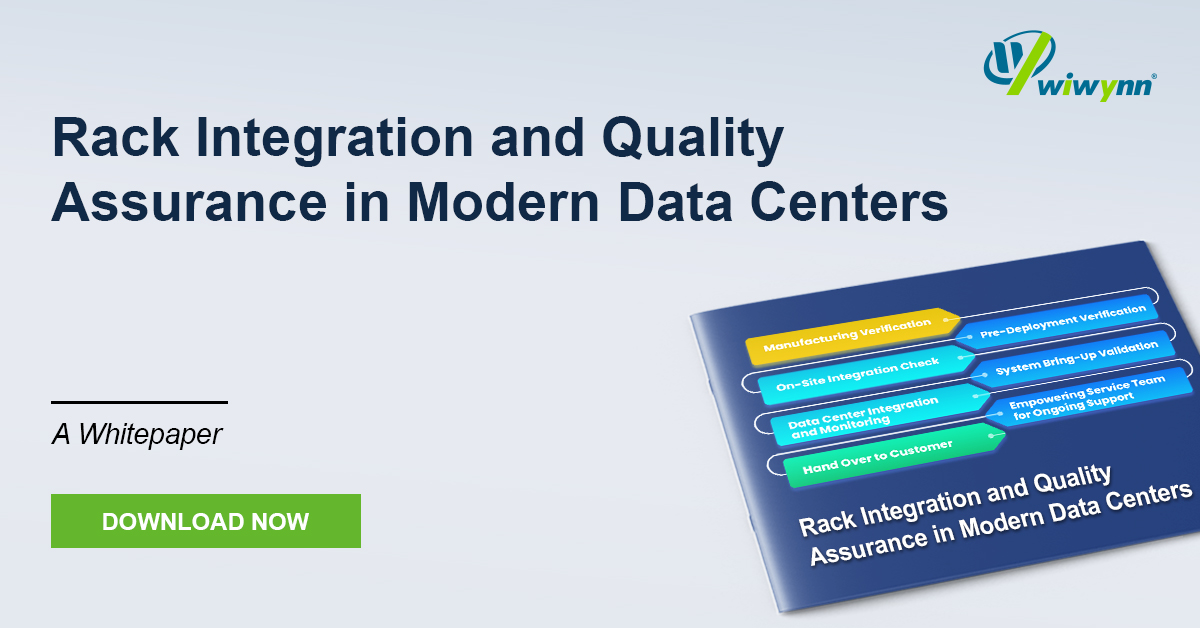

White Paper: Rack Integration and Quality Assurance in Modern Data Centers

The evolution of modern data centers demands a shift from isolated hardware delivery to seamless infrastructure integration. This whitepaper...

1 min read

Press

March 17, 2026

This paper introduces a paradigm shift in storage architecture designed to overcome the CPU-centric data path bottlenecks in modern AI workloads. By combining NVIDIA SCADA (Scaled Accelerated Data Access) with a 100% liquid-cooled, fanless chassis housing 96 E3.S NVMe drives, we have created an ultra-high-density storage solution.

Our approach removes the CPU from the control path, allowing 100,000+ concurrent GPU threads to initiate their own data requests and approach a theoretical limit of 100 million IOPS. The fanless design eliminates fan-induced mechanical vibration, which keeps tail latencies stable and protects drive longevity, while directing power to compute rather than air movement.
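To put the headline figures in proportion, here is a rough back-of-the-envelope sketch in Python. It uses only the three numbers quoted above and assumes, purely for illustration, that load is spread evenly across GPU threads and drives. The helper name `per_unit_iops` is hypothetical and not part of any product API.

```python
# Figures quoted in the text; everything else is derived arithmetic.
TARGET_IOPS = 100_000_000   # "theoretical limit of 100 million IOPS"
GPU_THREADS = 100_000       # "100,000+ concurrent GPU threads" (lower bound)
DRIVES = 96                 # "96 E3.S NVMe drives"


def per_unit_iops(total_iops: int, units: int) -> float:
    """Average IOPS each unit must sustain to reach total_iops.

    Assumes a perfectly even spread, which real workloads rarely achieve.
    """
    if units <= 0:
        raise ValueError("units must be positive")
    return total_iops / units


iops_per_thread = per_unit_iops(TARGET_IOPS, GPU_THREADS)
iops_per_drive = per_unit_iops(TARGET_IOPS, DRIVES)

print(f"per GPU thread: {iops_per_thread:,.0f} IOPS")
print(f"per drive:      {iops_per_drive:,.0f} IOPS")
```

Under that even-spread assumption, each of the 100,000 threads would need to issue about 1,000 requests per second, and each of the 96 drives would need to serve a little over one million IOPS to reach the stated theoretical limit.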

See how our GPU-initiated storage and advanced thermal management transform data centers by reducing physical footprints by up to 75% and lowering total data center OpEx by 40-50%. By streamlining data access for retrieval-augmented generation (RAG), vector databases, and Graph Neural Networks (GNNs), we enable AI enterprises to minimize Time-To-First-Token (TTFT) and maximize GPU cluster utilization. Download the whitepaper to explore our system architecture and implement a silent, ultra-high-performance accelerator for your next-generation AI ecosystem.


