1 min read

White Paper: GPU-Initiated, Liquid-Cooled, Ultra-High-Density Storage for Next-Gen AI

This paper introduces a paradigm shift in storage architecture designed to overcome the CPU-centric data path bottlenecks in modern AI workloads. By...

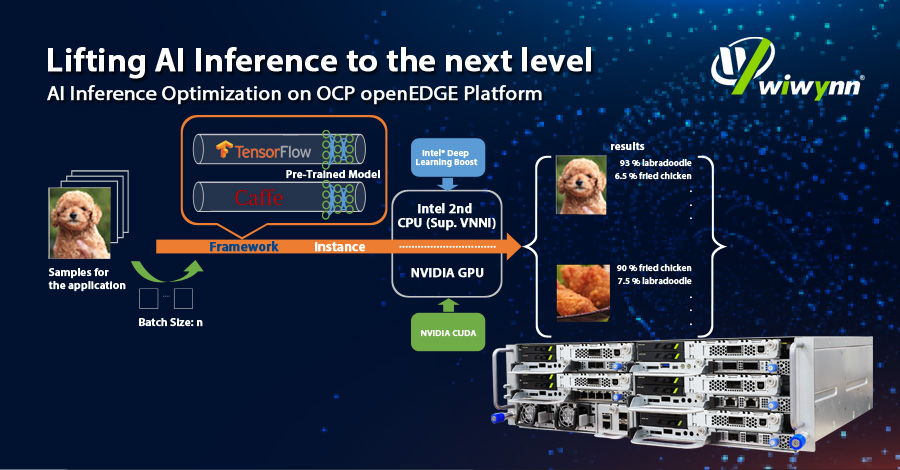

Looking for an edge AI server for your new applications? What is the most optimized solution? What parameters should you take into consideration? Check out Wiwynn’s latest whitepaper on AI inference optimization on the OCP openEDGE platform.

See how the Wiwynn EP100 helps you keep pace with the thriving edge application era and diverse AI inference workloads through powerful CPU and GPU inference acceleration!

Leave your contact information to download the whitepaper!

1 min read

This paper explores a disaggregated key-value (KV) storage architecture designed to efficiently offload KV cache tensors for generative AI workloads.

1 min read

This paper explores an advanced framework designed to automate the extraction of important attributes from unstructured part datasheets. By...