1 min read

White Paper: GPU-Initiated, Liquid-Cooled, Ultra-High-Density Storage for Next-Gen AI

This paper introduces a paradigm shift in storage architecture designed to overcome the CPU-centric data path bottlenecks in modern AI workloads. By...

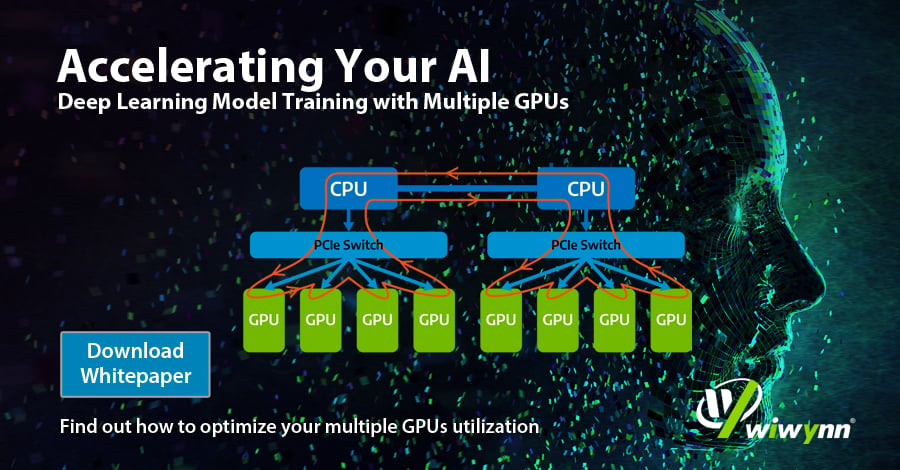

Deep learning has shown remarkable results in many fields. Rapid parameter adjustment is essential to a successful deep learning model. To accelerate the training process, many studies have adopted distributed deep learning systems that use multiple GPUs.

This paper explores a disaggregated key-value (KV) storage architecture designed to efficiently offload KV cache tensors for generative AI workloads.

This paper explores an advanced framework designed to automate the extraction of important attributes from unstructured part datasheets. By...