1 min read

White Paper: GPU-Initiated, Liquid-Cooled, Ultra-High-Density Storage for Next-Gen AI

This paper introduces a paradigm shift in storage architecture designed to overcome the CPU-centric data path bottlenecks in modern AI workloads. By...

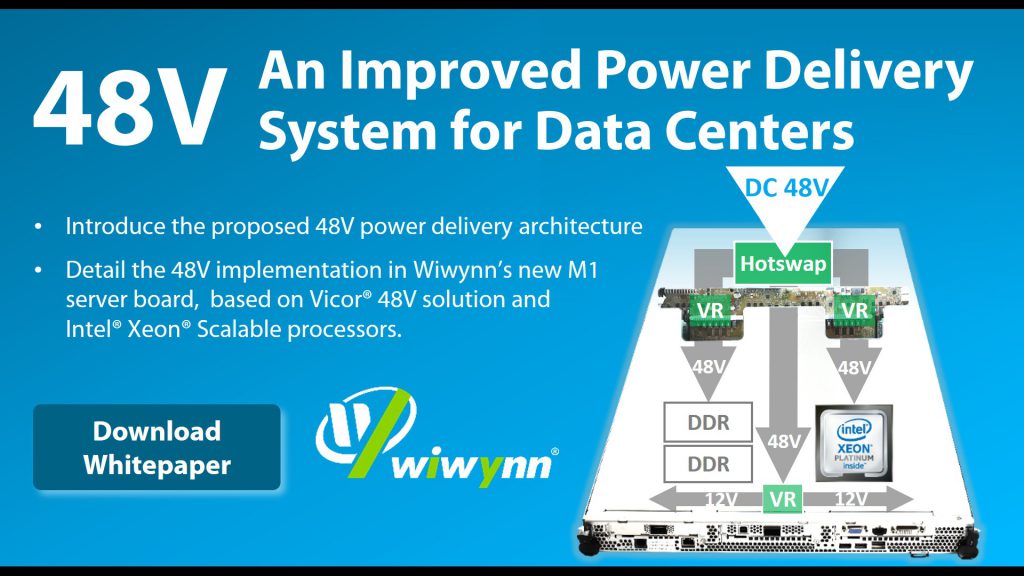

Wiwynn has announced the availability of a whitepaper on its 48V server platform, which is based on Vicor’s 48V Direct-to-PoL (Point-of-Load) solution and Intel® Xeon® Scalable processors.

The paper discusses the challenges of 12V power delivery systems in data centers, introduces the proposed 48V power delivery architecture, and details the 48V implementation on Wiwynn’s new M1 server board.

Leave your contact information to download the whitepaper.

This paper explores a disaggregated key-value (KV) storage architecture designed to efficiently offload KV cache tensors for generative AI workloads.

This paper explores an advanced framework designed to automate the extraction of important attributes from unstructured part datasheets. By...