SV7100G4

Highly Modularized Multi-node Platform designed for Easy Serviceability

Features

OCP Accepted Multi-Node Server with High Scalability

SV7100G4 is based on the OCP Yosemite V3 specification, which is built for the Open Rack V2 architecture. It is a highly modularized system in which server boards, expansion boards, a baseboard, power modules and cooling fans fit into a 4OU cubby chassis. The cubby carries 3 sleds with up to 4 blades in each sled, for a total of 12 blades, making it a highly scalable system.

Power Efficiency and Inference Capability for Specific Workloads

SV7100G4 uses 3rd Generation Intel® Xeon® Scalable processors, which support up to 88W of power and up to 26 cores. This combination is power-efficient for web-service workloads and, on top of that, can handle AI inference and ML workloads with the support of Enhanced Intel® Speed Select Technology, Enhanced Intel® Deep Learning Boost with bfloat16, and AVX-512.

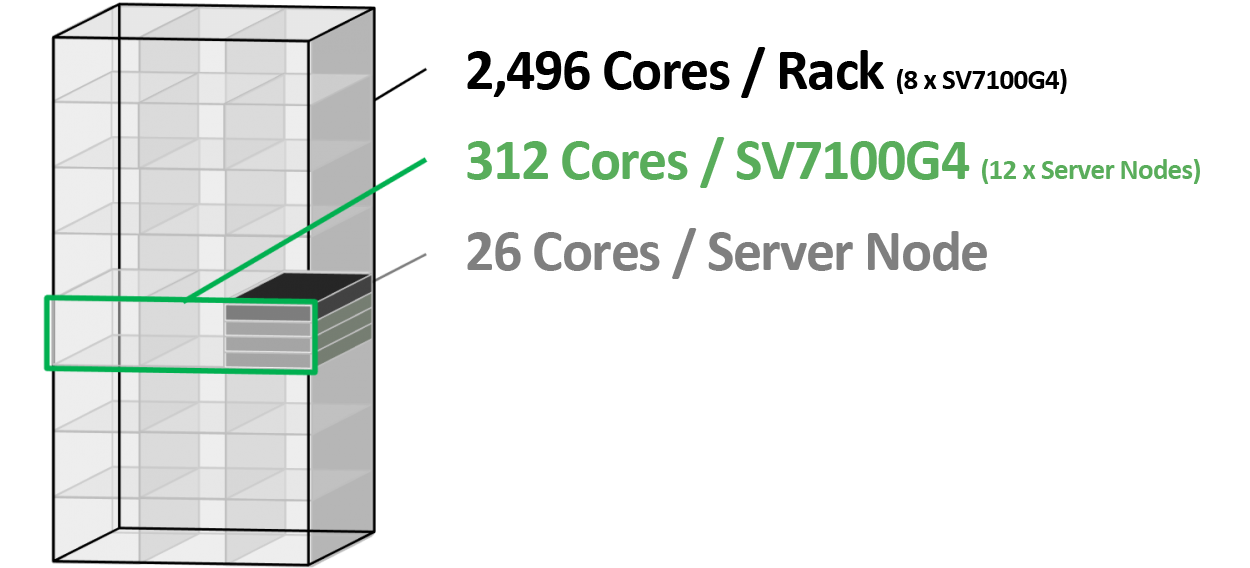

High Density and Cost-Efficient Compute Rack

SV7100G4 is a multi-node server platform that hosts four 1OU blades in a sled that fits into the OCP cubby chassis. When an Open Rack is filled with 1OU blades, each 4OU system carries 12 blades and the rack accommodates 8 systems, for up to 96 servers per rack. With up to 26 cores per server, this makes it an ideal high-density, 2,496-core computing rack for hyperscale datacenters.
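The density figures above follow from simple multiplication. The Python sketch below reproduces that arithmetic using only the counts quoted on this page; the constant and function names are illustrative and are not part of any product tooling.

```python
# Rack density arithmetic using the figures quoted on this page.
BLADES_PER_SLED = 4    # up to 4 blades in each sled
SLEDS_PER_SYSTEM = 3   # 3 sleds in one 4OU cubby chassis
SYSTEMS_PER_RACK = 8   # systems per Open Rack, as stated in the text
CORES_PER_BLADE = 26   # one socket per blade, up to 26 cores


def rack_density(blades_per_sled=BLADES_PER_SLED,
                 sleds_per_system=SLEDS_PER_SYSTEM,
                 systems_per_rack=SYSTEMS_PER_RACK,
                 cores_per_blade=CORES_PER_BLADE):
    """Return blade, server and core counts for a fully populated rack."""
    blades_per_system = blades_per_sled * sleds_per_system
    servers_per_rack = blades_per_system * systems_per_rack
    cores_per_rack = servers_per_rack * cores_per_blade
    return {
        "blades_per_system": blades_per_system,
        "servers_per_rack": servers_per_rack,
        "cores_per_rack": cores_per_rack,
    }


if __name__ == "__main__":
    for name, value in rack_density().items():
        print(f"{name}: {value}")
```

Changing any default, for example a rack that holds fewer than 8 systems, shows how the per-rack totals scale.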

Advanced Front-Access Blade Design for Easy Serviceability

SV7100G4’s sleds and blades are designed for front access, which makes them easy to reach and maintain. All blades in a sled share one multi-host OCP NIC 3.0, so the four blades in a sled need one network connection instead of four, which simplifies cabling and maintenance for operators. The horizontal sled design can also reduce maintenance time compared to traditional blade servers. In addition, the dedicated fan zones, with one-fan-failure redundancy, help maintain system availability.
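The cabling saving from the shared NIC can be illustrated with a short Python sketch. It assumes one network link per NIC and compares against a hypothetical baseline in which every blade has its own NIC; both assumptions, and the function name, are illustrative rather than taken from the product documentation.

```python
# Network links needed when blades share a multi-host NIC.
# Assumption: each NIC uses exactly one network link.


def nic_links(blades, blades_per_nic):
    """Number of NIC links needed for `blades` blades (ceiling division)."""
    if blades_per_nic < 1:
        raise ValueError("blades_per_nic must be at least 1")
    return -(-blades // blades_per_nic)


if __name__ == "__main__":
    for label, blades in (("sled", 4), ("4OU system", 12), ("rack", 96)):
        dedicated = nic_links(blades, 1)  # hypothetical: one NIC per blade
        shared = nic_links(blades, 4)     # one multi-host NIC per 4 blades
        print(f"{label}: {dedicated} links -> {shared} links "
              f"({dedicated - shared} fewer)")
```

Under these assumptions, each sled needs one link instead of four, and the saving scales linearly with the number of sleds in the rack.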

Tech Spec

| Form Factor, Processor, Memory and Chipset | |
| --- | --- |
| Form Factor | 1OU, one-third width of OCP vCubby chassis |
| Processor | Intel® 3rd Generation Intel® Xeon® Scalable Processors |
| Processor Sockets | One per blade (4 server blades in a 1OU sled) |
| Chipsets | Intel® C621 series |
| System Bus | Intel® UltraPath Interconnect; 10.4GT/s |
| Memory | Up to 1.92TB, DDR4 up to 2666-3200MT/s, 6 RDIMM slots per blade |

| Storage and I/O | |
| --- | --- |
| Storage | One M.2 solid-state drive in 2280 or 22110 form factor |
| Expansion Slots | One PCIe x16 slot and one PCIe x8 slot (reserved) |
| Front I/O | Power button x 1; status LEDs x 2 |
| LAN | Multi-host 100GbE OCP NIC 3.0 (shared by 4 nodes) |
| BMC Chipset | ASPEED AST2520 |
| Remote Management | NC-SI port via multi-host OCP NIC 3.0; OpenBMC-based SSH remote management |

| Physical Specifications | |
| --- | --- |
| Power Consumption | 130W ~ 165W (Max) |
| Dimensions (mm) | 185 (W) x 781 (D) x 173 (H) |
| Weight | 19.5kg |

| Chassis Specifications | |
| --- | --- |
| PSU | Centralized 12.5V DC bus bar |
| Fan | Four 8030 fan modules per sled, with one-fan-failure redundancy |
| Dimensions (mm) | 537 (W) x 801 (D) x 191 (H) |

Download

| Category | File Title | Release Date | Actions |
| --- | --- | --- | --- |
| Datasheet | SV7100G4 Datasheet | 2021/09/17 | Download |